Deploy Whisperers from the UI with Spider Controllers

The killer feature that will make me rewrite the current training material and a big part of the documentation! 😲

Context

Currently, to launch a Whisperer in Kubernetes, you need to configure it as a sidecar in the Pod definition of your workload.

This works well, but it requires a manual step that we all tend to put off, since it is neither the easiest nor the quickest way to get started!

To make Spider easier to adopt, I have wanted for a while to be able to spawn Whisperers from the UI.

Crazy dream, isn't it?

Well, no: with the Kubernetes API, this had to be achievable.

And I found a solution using ephemeral containers!

Solution

An ephemeral container is a debug container that you can spawn INSIDE a running Pod, without restarting it.

It gains access to the Pod's Linux namespaces (process, network, etc.), so it is even more tightly integrated than two regular containers running in the same Pod.

The documentation on it is quite sparse, and the official Node.js SDK documentation is not up to date, but I managed to build a proof of concept, and it worked 😍!

A Whisperer can be launched as an ephemeral container inside another Pod and capture its communications.
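To make this concrete, here is a minimal sketch of the request body involved. Kubernetes adds ephemeral containers through the Pod's `ephemeralcontainers` subresource, using a patch that lists the new container under `spec.ephemeralContainers`. The container name, image reference, namespace and Pod name below are placeholders chosen for illustration, not the values Spider actually uses.

```python
import json


def ephemeral_container_patch(name, image, target_container=None):
    """Build the patch body for a Pod's `ephemeralcontainers` subresource."""
    container = {"name": name, "image": image}
    if target_container is not None:
        # Optional: target one specific container of the Pod.
        container["targetContainerName"] = target_container
    return {"spec": {"ephemeralContainers": [container]}}


def ephemeral_containers_path(namespace, pod):
    """API path of the subresource that receives the patch."""
    return f"/api/v1/namespaces/{namespace}/pods/{pod}/ephemeralcontainers"


# Hypothetical values, for illustration only.
patch = ephemeral_container_patch("whisperer", "example.org/spider/whisperer:latest")
path = ephemeral_containers_path("default", "my-app-7d4b9c")
print(path)
print(json.dumps(patch, indent=2))
```

The Pod keeps running while the patch is applied, which is what makes the approach usable on live workloads.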

However, this approach on its own is missing a few things:

- As you don't change the workload spec, the ephemeral container is attached to a single Pod, not to all Pods of the ReplicaSet

- As it is ephemeral, it is not recreated when the Pod's spec changes or the Pod moves to another node

- The host Pods do not have the service account required to resolve Pod names from their IPs

- No way to add HostAliases

- No way to add ConfigMaps or Secrets dedicated to it

- No way to have a specific imagePullSecret to get the image from a private repository

I found solutions for all these drawbacks. Because the pros are so awesome, it was worth it 😀

- To spawn ephemeral containers, I needed a specific service to do it

- The service would watch all K8s objects to list targets and know their changes

- It would then take care of attaching Whisperers to all replicas, and of re-attaching them when Pods restart

- This service would act as a DNS proxy for the Whisperers to resolve Pod IPs

- It would also resolve other IPs and avoid the issue with missing HostAliases

- And finally, I would make Whisperer container images public, so there is no need to bother with pull secrets
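As an illustration of the Pod IP resolution idea in the list above, here is a minimal sketch of an index kept up to date from watch events and queried by IP. The `PodIndex` class and the simplified Pod dictionaries are hypothetical; only the event types (`ADDED`, `MODIFIED`, `DELETED`) come from the Kubernetes watch API. This is not the Controller's actual code.

```python
class PodIndex:
    """Maps Pod IPs to 'namespace/name' keys from a stream of watch events."""

    def __init__(self):
        self._by_ip = {}

    def apply_event(self, event_type, pod):
        key = f"{pod['namespace']}/{pod['name']}"
        # Drop any stale entry for this Pod: its IP may have changed.
        for known_ip in [ip for ip, k in self._by_ip.items() if k == key]:
            del self._by_ip[known_ip]
        ip = pod.get("ip")
        if event_type in ("ADDED", "MODIFIED") and ip:
            self._by_ip[ip] = key

    def resolve(self, ip):
        """Return 'namespace/name' for a known Pod IP, else None."""
        return self._by_ip.get(ip)


index = PodIndex()
index.apply_event("ADDED", {"namespace": "shop", "name": "cart-0", "ip": "10.0.0.5"})
index.apply_event("MODIFIED", {"namespace": "shop", "name": "cart-0", "ip": "10.0.0.9"})
print(index.resolve("10.0.0.9"))  # shop/cart-0
print(index.resolve("10.0.0.5"))  # None
```

Because the lookup lives in one service, the host Pods themselves need no extra rights to map IPs to names.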

It all worked out. 😁

It worked so well that I went further, implementing namespace allow and deny lists, a time-to-live option, and so on 💪

Here comes the first release of Spider K8s Controllers! 🎉

How does it work?

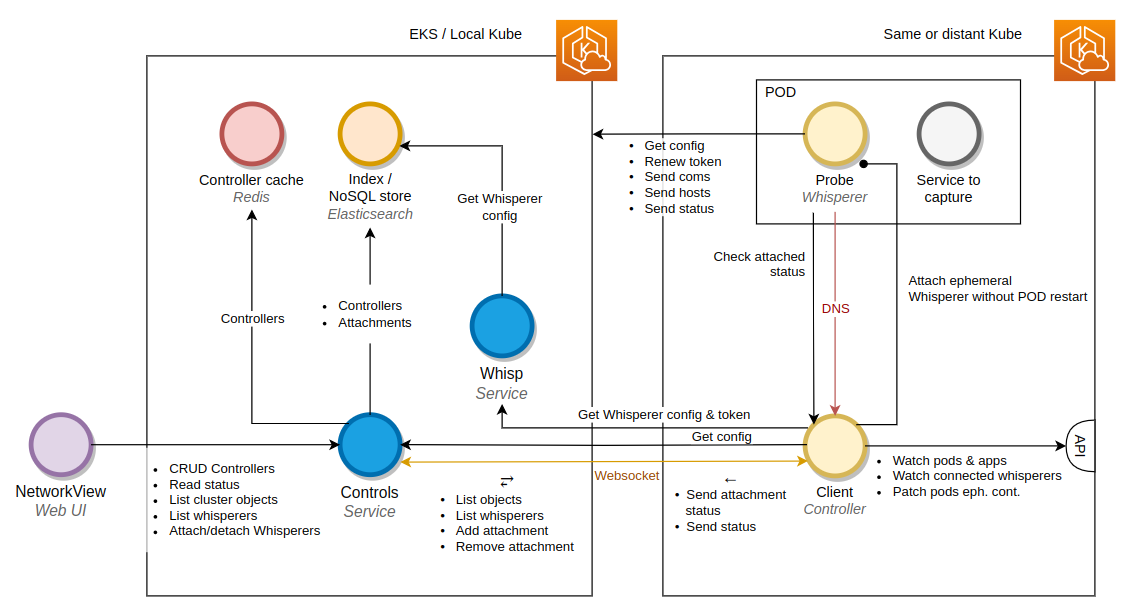

Architecture

Description

- A Controller client

  - Launched in ANY (yes ANY) Kubernetes cluster

  - Connects to the Spider backoffice by websocket

  - Watches K8s workloads

  - Receives 'attachments' from Spider to spawn Whisperers on workloads:

    - Deployments

    - StatefulSets

    - CronJobs

    - Pods

  - Spawns Whisperers on K8s workload events

  - Compares the desired state with the current state at regular intervals and fixes any difference

  - Receives 'detachment' requests to tell Whisperers to stop

  - Acts as a DNS proxy for all Whisperers launched

- A Controls service

  - Acts as CRUD for Controllers

  - Acts as a proxy to send commands and configuration to connected Controllers

  - Allows fetching the current K8s state

  - Allows many Controllers from different clusters to connect to Spider

  - Controls users' access rights
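The Controller's periodic comparison of desired and current state, listed above, can be pictured as a small set difference. This is only a sketch under assumptions: the `reconcile` function, the dictionary shapes and the `owner` field (standing for the workload a Pod ultimately belongs to) are hypothetical and chosen for illustration.

```python
def reconcile(attachments, pods, attached):
    """Return (to_spawn, to_stop) as sets of (pod_name, whisperer) pairs.

    attachments: requested attachments of a Whisperer to a workload
    pods:        Pods currently seen in the cluster
    attached:    (pod_name, whisperer) pairs that already run a Whisperer
    """
    desired = set()
    for att in attachments:
        for pod in pods:
            same_ns = pod["namespace"] == att["namespace"]
            if same_ns and pod["owner"] == att["workload"]:
                desired.add((pod["name"], att["whisperer"]))
    # Missing pairs must be spawned, leftover pairs must be stopped.
    return desired - attached, attached - desired


attachments = [{"namespace": "shop", "workload": "Deployment/cart", "whisperer": "w-debug"}]
pods = [
    {"namespace": "shop", "name": "cart-abc", "owner": "Deployment/cart"},
    {"namespace": "shop", "name": "cart-def", "owner": "Deployment/cart"},
]
attached = {("cart-abc", "w-debug"), ("cart-old", "w-debug")}

to_spawn, to_stop = reconcile(attachments, pods, attached)
print(sorted(to_spawn))  # [('cart-def', 'w-debug')]
print(sorted(to_stop))   # [('cart-old', 'w-debug')]
```

Running the same comparison on every workload event and on a timer is what lets an attachment follow all replicas of a workload, including Pods created after the attachment was requested.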

I had to slightly enhance the way Whisperers manage authentication to Spider, since the Controller could not have access to the private part of the key pair...

But it's a success!

Let's see the UI changes now.

How to (UI)

The UI went through many changes to integrate Controllers and Attachments features.

Concepts:

- New Controller, alongside Teams and Whisperers: the point of contact to attach a Whisperer to a remote workload.

- New Attachment: the configuration of a requested attachment of a Whisperer to a workload.

- The attachment can be detached once it is no longer needed.

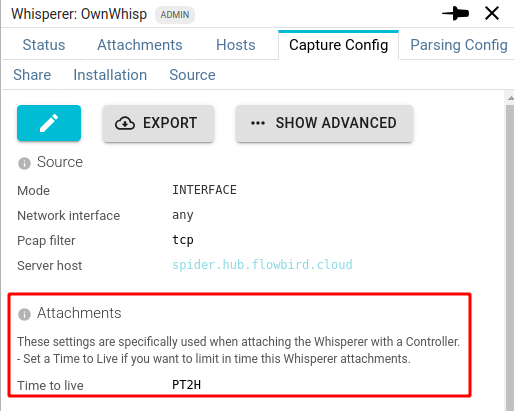

- The attachment of a Whisperer may be limited in time, depending on the Whisperer configuration. This is a safeguard against forgetting to detach it.

Controllers

Controllers are accessed, created and managed from the menu, right next to the teams:

- On the left, their status: connected or not

- On the right, access to the attachments

Attachment

Asking for an attachment

You can ask for an attachment from various places:

- From the floating action button at the top left, by clicking on the blue link icon

- From the Controller details

- From the Whisperer details

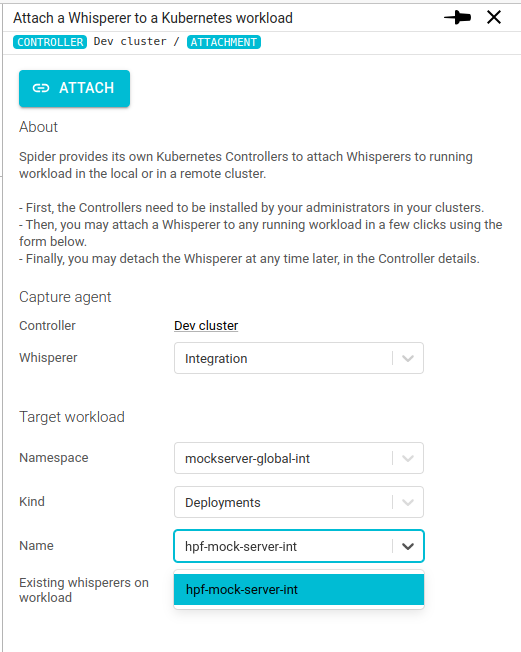

Configuring the attachment

Clicking on the Attach buttons above leads to this form:

On it, you may select the Workload on which to attach a Whisperer.

Click ATTACH and it is done! 😄

If the Whisperer has a limited timeToLive set in its configuration, the attachment will only live for that long before being detached automatically:

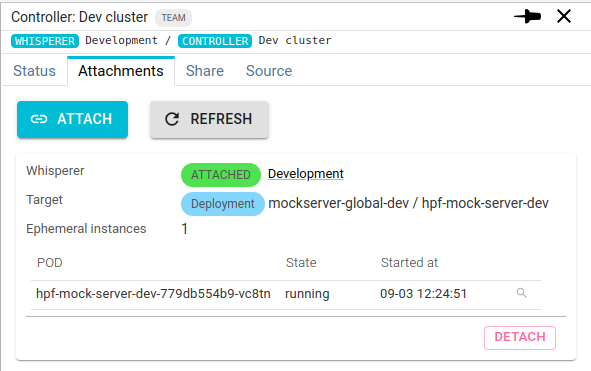

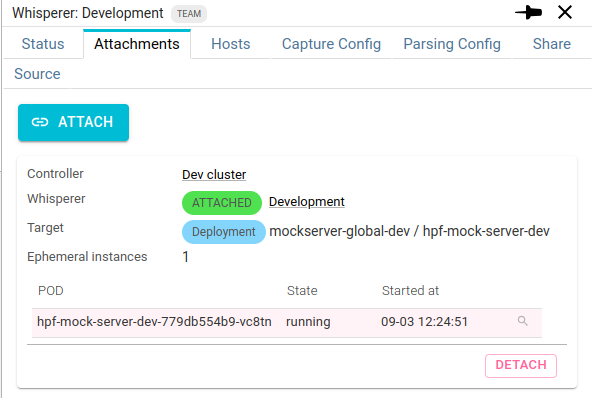
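The time-limited behaviour boils down to a deadline check. The sketch below assumes that `timeToLive` is expressed in seconds and that an unset value means no limit. Both are assumptions made for illustration, as are the function names.

```python
from datetime import datetime, timedelta, timezone


def detach_deadline(attached_at, time_to_live_s):
    """Return when the attachment should be detached, or None if unlimited."""
    if time_to_live_s is None:
        return None
    return attached_at + timedelta(seconds=time_to_live_s)


def is_expired(attached_at, time_to_live_s, now):
    """True once the assumed time-to-live has elapsed."""
    deadline = detach_deadline(attached_at, time_to_live_s)
    return deadline is not None and now >= deadline


attached_at = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
half_hour_later = attached_at + timedelta(minutes=30)
one_hour_later = attached_at + timedelta(hours=1)

print(is_expired(attached_at, 3600, half_hour_later))  # False
print(is_expired(attached_at, 3600, one_hour_later))   # True
print(is_expired(attached_at, None, one_hour_later))   # False
```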

Detachment

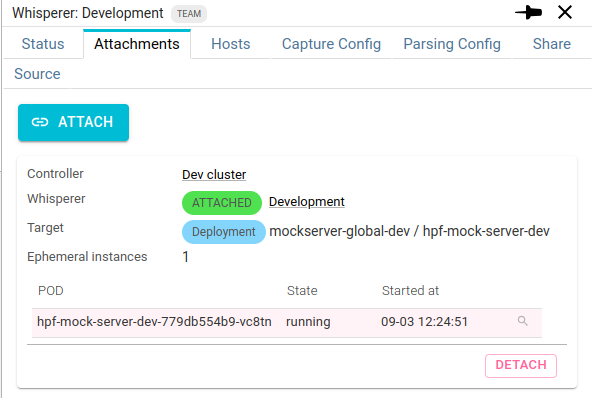

The active attachments are displayed in the Attachments tabs.

From there you see:

- The Controller

- The Whisperer

- The target workload and its attachment status

- The list of all Pods linked to this workload that have been attached

And you may detach an attachment.

This will ask the Whisperer to stop, independently of the Whisperer capture configuration.

Thus you may spawn a Whisperer for a limited amount of time.

Then what?

Nothing!

Data flows in just as it does from a regular Whisperer.

You may filter on this specific Whisperer instance by clicking on the filter icon in the attachment description.

Monitoring Controllers

Spider Monitoring UI has not been updated yet with specific metrics for Controllers.

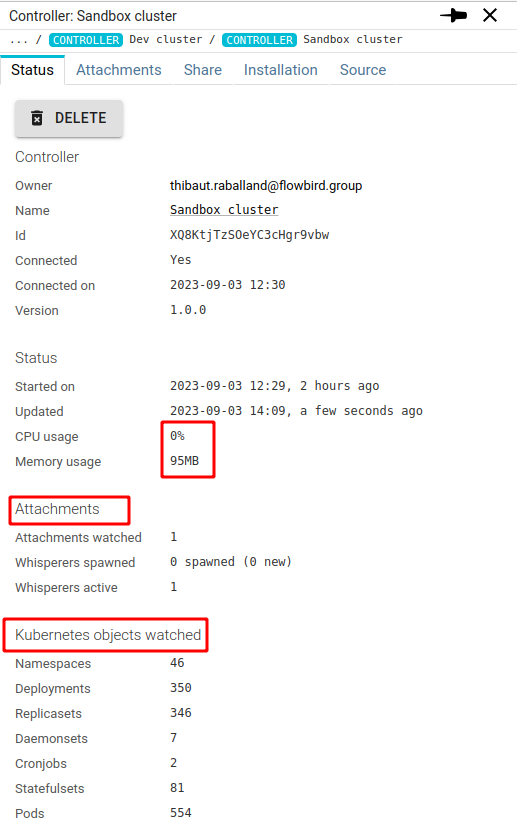

However, the current Controller status is reported to the server:

There you can see, from top to bottom:

- The Controller version

- Its resource usage (quite low, as usual 😉 )

- The Attachments under its responsibility, and the linked Whisperers spawned

- The count of Kubernetes objects watched by the Controller.

Access rights

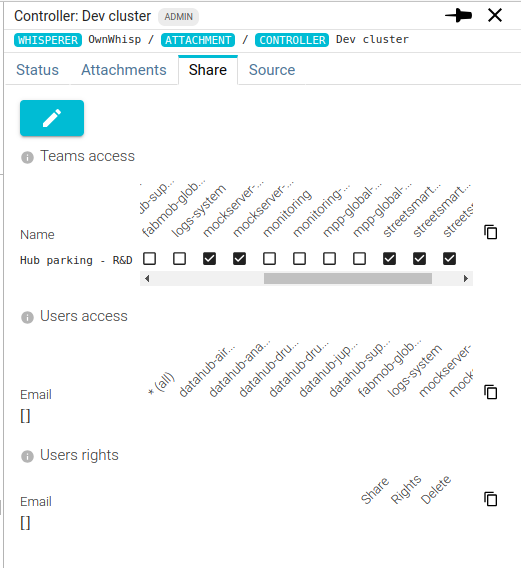

As for all other Spider resources, you need specific rights to access Controllers.

The Share tab allows Controller administrators to share a Controller with teams or individual users, in the same way as other Spider resources.

The Controller can be limited to a subset of namespaces for each team and user.

You surely do not have the right to see every application in the cluster!

Don't use Spider as a Trojan horse 😅
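Restricting a Controller to a subset of namespaces can be thought of as a simple filter. The sketch below assumes exact-name matching with an optional allow list and a deny list. The function and parameter names are hypothetical, and the real matching rules may differ.

```python
def visible_namespaces(namespaces, allowed=None, denied=()):
    """Keep namespaces that are allowed (when an allow list is set) and not denied."""
    return [
        ns for ns in namespaces
        if (allowed is None or ns in allowed) and ns not in denied
    ]


cluster = ["default", "kube-system", "shop", "billing"]

# No restriction except hiding system namespaces.
print(visible_namespaces(cluster, denied={"kube-system"}))
# ['default', 'shop', 'billing']

# A team limited to its own namespaces.
print(visible_namespaces(cluster, allowed={"shop", "billing"}))
# ['shop', 'billing']
```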

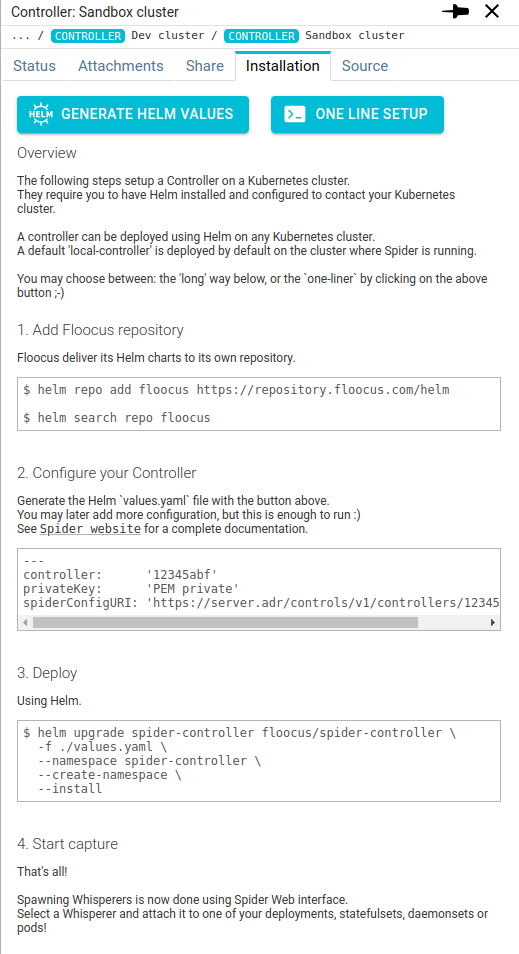

Installing Controllers

I put a good deal of work into making Controller installation as quick and easy as possible.

And I don't see how to make it shorter! There is a one-line setup command that can be generated from the UI 😲!

Or you can generate the Helm values.yaml file from the interface.

I can't make it easier! 😘

The installation can also limit the namespaces seen by the Controller. See the installation instructions.

Use cases

What use cases do I see for these brand new Controllers?

- Capture external software without hacking into its configuration

- Quick capture without modifying your applications

- Attach existing Whisperer configurations (sidecars) to temporary services

- Dedicate low-level capture Whisperers to attach on demand (debug)

We could define a time-limited Whisperer that saves low-level communications, such as Redis, PostgreSQL or Elasticsearch traffic.

And allow developers to attach it to any workload to debug 'strange' behaviors! 👍

What's next?

I think sidecars are still interesting (IaC), but I may soon deprecate them by implementing Spider's own Custom Resource Definitions (CRDs) to add attachments to workloads...

Wait and see.

Side effects

You can now remove the existing service accounts from Pods with sidecar Whisperers when a Controller is accessible.

Indeed, a sidecar Whisperer may use the Controller as a DNS proxy even if it wasn't spawned by it!

You won't have to remember to upgrade your Whisperer versions ;)

They will be upgraded together with the system version!

Upgrade your existing Whisperers' version and parameters to use the Controllers!

Cheers,

Thibaut